2012 MARS, Colley, Massey and ODM Ratings After Round 23

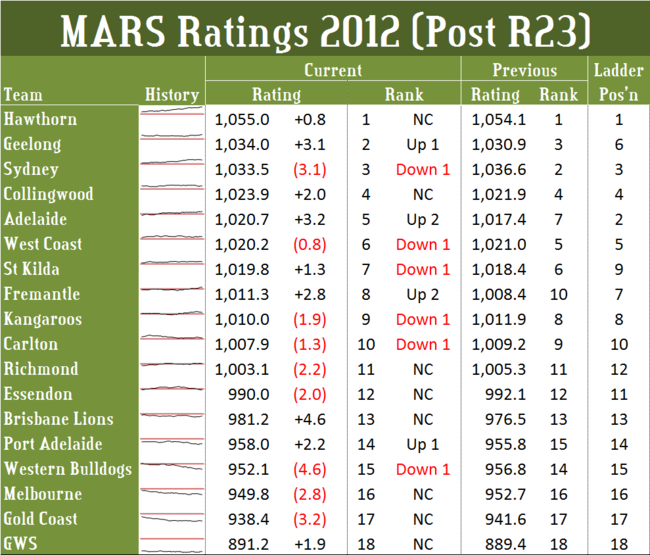

For the second time in three weeks, MARS has seen fit to re-rank more than half of the teams in the competition. This week the reshuffle involved six of the eight Finalists, leaving only Hawthorn in 1st and Collingwood in 4th unmoved.

Fremantle and Adelaide were the only big movers, each jumping two places, Fremantle into 8th and Adelaide into 6th.

Hawthorn enters the Finals with a MARS Rating about 7 Ratings Points (RPs) lower than Collingwood's when the Pies entered the Finals last season, but about 2.5 RPs higher than Geelong's at that same point, the Cats being the eventual premiers. The Hawks' lead of 21 RPs over the next-highest rated team, Geelong, is, however, much greater than the lead the Pies enjoyed going into their 2011 campaign. It is, in fact, the greatest lead that any team has enjoyed on entering a Finals series since 2008, when the Cats went in with a 31 RP lead over the Hawks.

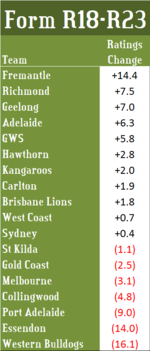

If momentum going into the Finals means anything (and, for the record, I don't think it does, but nonetheless) then Fremantle must be considered sterling chances to progress beyond next weekend. Over the last five rounds of the home-and-away season they accrued over 14 RPs, almost 7 RPs more than any other team.

Next best was Richmond, who, for all the good it did them, netted 7.5 RPs to finish with a Rating of 1,003.1. That's the highest Rating we've seen for a team finishing 12th on the ladder since Adelaide ended the 2004 home-and-away season with a Rating of 1,004.9.

Amongst the Finalists, only Collingwood enters the Finals without having increased its Rating over the past five rounds. Instead, the Pies shed almost 5 RPs in that period.

Only three teams surrendered a larger number of RPs: the Dogs, who gave up over 16 RPs, the Dons, who gave up 14 RPs, and Port Adelaide, who gave up 9 RPs.

Across the home-and-away season this year, the average game saw 2.48 RPs change hands, only a little down on the average of 2.63 RPs recorded in 2011. Broadly and in aggregate, the dynamics of MARS Rating changes this year have been very similar to those of 2011: about 30% of games resulted in 1.5 RPs or fewer being swapped, another 30% in between 1.5 and 3 RPs changing hands, and the remaining 40% in more than 3 RPs being exchanged. (For the statistically curious, the distribution of RP changes in 2012 is somewhat positively skewed, with a coefficient of skewness of +0.50, and less peaked than a Normal distribution, with an excess kurtosis of -0.62.)
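
For anyone wanting to produce summary figures like these from their own game-by-game data, here's a minimal Python sketch. It assumes a hypothetical list holding the number of RPs that changed hands in each game, treats the "between 1.5 and 3" band as excluding 1.5 and including 3 (an assumption of this sketch about where the boundaries fall), and uses the simple moment-based estimators of skewness and excess kurtosis. The figures quoted above may have come from a slightly different, sample-adjusted estimator.

```python
from statistics import mean


def describe_rp_changes(changes):
    """Summarise per-game RP changes (hypothetical helper, not the MARS code)."""
    n = len(changes)
    mu = mean(changes)
    # Central moments in population form (dividing by n)
    m2 = sum((x - mu) ** 2 for x in changes) / n
    m3 = sum((x - mu) ** 3 for x in changes) / n
    m4 = sum((x - mu) ** 4 for x in changes) / n
    skewness = m3 / m2 ** 1.5           # > 0 means a longer right-hand tail
    excess_kurtosis = m4 / m2 ** 2 - 3  # < 0 means flatter than a Normal
    # Share of games falling in each of the three bands used in the text
    low = sum(1 for x in changes if x <= 1.5) / n
    middle = sum(1 for x in changes if 1.5 < x <= 3) / n
    high = sum(1 for x in changes if x > 3) / n
    return {
        "mean": mu,
        "share_up_to_1.5": low,
        "share_1.5_to_3": middle,
        "share_over_3": high,
        "skewness": skewness,
        "excess_kurtosis": excess_kurtosis,
    }


# Made-up RP changes, purely for illustration
summary = describe_rp_changes([1, 2, 3, 4, 5])
```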

Whilst we're making comparisons with last year, we should note that the interquartile Ratings difference (the gap in Rating between the teams ranked 4th and 15th) now stands at 71.8 RPs, a season high and over 16.5 RPs higher than at the same point last season. That's support for any argument that the competition is now chronically imbalanced.

The contrary evidence, which is good news as we enter the Finals, is that the gap between the teams ranked 4th and 8th is just 12.6 RPs, which is almost 15 RPs less than it was in 2011.
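
Both of these spreads are just the difference between the Ratings at two ladder positions. A minimal sketch, assuming a hypothetical dictionary mapping each team to its Rating (the evenly spaced Ratings in the usage lines are made up):

```python
def rank_gap(ratings, upper_rank, lower_rank):
    """Rating of the team ranked upper_rank minus that of the team ranked lower_rank (1 = top)."""
    ordered = sorted(ratings.values(), reverse=True)
    return ordered[upper_rank - 1] - ordered[lower_rank - 1]


# Hypothetical 18-team competition with evenly spaced Ratings
ratings = {f"Team {k}": 1050 - 5 * k for k in range(1, 19)}
interquartile_gap = rank_gap(ratings, 4, 15)  # 4th versus 15th, as defined above
finals_gap = rank_gap(ratings, 4, 8)          # 4th versus 8th
```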

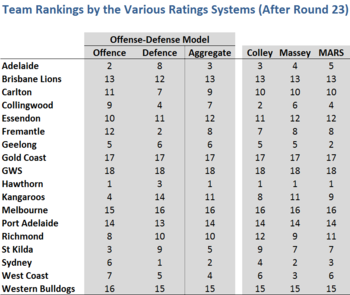

So, how do MARS' opinions of the various teams compare with those of Colley, Massey and ODM - and, for that matter, how do these Ratings Systems' opinions agree with each other?

As usual, the answer is: in general, pretty well, but with higher levels of disagreement about a few teams in particular. If we define significant disagreement as a difference of 3 or more ladder positions between the highest and lowest Ranking given to the same team across the Ratings Systems, then there are only six teams about which such disagreement remains:

- Collingwood, ranked 2nd by Colley, 4th by MARS, 6th by Massey and 7th by ODM

- Geelong, ranked 2nd by MARS, 5th by Colley and Massey, and 6th by ODM

- Kangaroos, ranked 8th by Colley, 9th by MARS, and 11th by Massey and ODM

- Richmond, ranked 9th by Massey, 10th by ODM, 11th by MARS, and 12th by Colley

- St Kilda, ranked 5th by ODM, 7th by Massey and MARS, and 9th by Colley

- West Coast, ranked 3rd by Massey, 4th by ODM, and 6th by Colley and MARS
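
The "3 ladder positions or more" test is easy to apply mechanically. Here's a minimal sketch using the Rankings listed above for those six teams (the other teams' Rankings aren't listed here, so they're left out); it also reports which Systems sit at either extreme for each team. The function names are hypothetical.

```python
# Rankings for the six teams listed above, by Rating System
rankings = {
    "Collingwood": {"Colley": 2, "MARS": 4, "Massey": 6, "ODM": 7},
    "Geelong": {"MARS": 2, "Colley": 5, "Massey": 5, "ODM": 6},
    "Kangaroos": {"Colley": 8, "MARS": 9, "Massey": 11, "ODM": 11},
    "Richmond": {"Massey": 9, "ODM": 10, "MARS": 11, "Colley": 12},
    "St Kilda": {"ODM": 5, "Massey": 7, "MARS": 7, "Colley": 9},
    "West Coast": {"Massey": 3, "ODM": 4, "Colley": 6, "MARS": 6},
}


def disagreements(rankings, threshold=3):
    """Teams whose highest and lowest Rankings differ by at least `threshold` places."""
    flagged = {}
    for team, by_system in rankings.items():
        spread = max(by_system.values()) - min(by_system.values())
        if spread >= threshold:
            flagged[team] = spread
    return flagged


def systems_at_extreme(by_system):
    """Systems giving a team its best or worst Ranking (ties included)."""
    best, worst = min(by_system.values()), max(by_system.values())
    return sorted(s for s, r in by_system.items() if r in (best, worst))


spreads = disagreements(rankings)
odm_extreme_count = sum("ODM" in systems_at_extreme(r) for r in rankings.values())
```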

ODM's Ranking sits at one extreme or the other for four of those teams. You'll recall that, as well as an overall Rating, ODM provides separate Ratings and Rankings for every team based on its offensive and defensive performances.

Here are those latest Rankings:

ODM ranks Collingwood 4th on their defensive performances - a ranking that is more consistent with the other Rating Systems - but only 9th on their offensive skills, which drags them down to 7th overall.

Geelong it ranks 6th overall, only one place lower than Colley and Massey rank them, a result entirely due to ODM's ranking the Cats only 6th on defensive performances.

The Roos' ODM ranking is dragged down by their 14th-place ranking on defence, a full 10 places lower than their ranking on offence. This defensive ranking seems reasonable when you recognise that the Roos have conceded 2,097 points this season, the 6th-highest total of any team.

St Kilda also have a large discrepancy between their ODM offensive and defensive rankings. They're 3rd on offence but 9th on defence, which is enough to have ODM ranking them 5th overall, a full two places higher than any other Rating System.

Three other teams' ODM rankings are notable for the large difference between their offensive and defensive rankings: Adelaide are ranked 2nd on offence but 8th on defence, Fremantle 12th on offence but 2nd on defence, and Sydney 6th on offence but 1st on defence.
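
To show how separate offensive and defensive rankings like these can arise, here's a minimal sketch assuming ODM follows the standard Offense-Defense Model described by Langville and Meyer (the exact variant used for these Ratings may differ). A team's offensive Rating rises when it scores heavily against tight defences; its defensive Rating, where lower is better, rises when it concedes heavily to weak attacks. The two are solved by alternating iteration. The game format, function names and three-team results are all hypothetical, and the sketch assumes every team scores at least some points.

```python
def odm(teams, games, max_iter=1000, tol=1e-10):
    """Offense-Defense Model sketch.

    games: list of (team_1, points_1, team_2, points_2) tuples (hypothetical format).
    Returns {team: (offence, defence, overall)}: higher offence is better,
    lower defence is better, and overall = offence / defence.
    """
    idx = {team: k for k, team in enumerate(teams)}
    n = len(teams)
    # a[i][j] = total points team j scored against team i
    a = [[0.0] * n for _ in range(n)]
    for t1, p1, t2, p2 in games:
        a[idx[t2]][idx[t1]] += p1
        a[idx[t1]][idx[t2]] += p2

    def offence(d):
        # o_j = sum_i a[i][j] / d_i: scoring against tight defences counts for more
        return [sum(a[i][j] / d[i] for i in range(n)) for j in range(n)]

    d = [1.0] * n
    for _ in range(max_iter):
        o = offence(d)
        # d_i = sum_j a[i][j] / o_j: conceding to weak attacks counts against you more
        new_d = [sum(a[i][j] / o[j] for j in range(n)) for i in range(n)]
        done = max(abs(x - y) for x, y in zip(new_d, d)) < tol
        d = new_d
        if done:
            break
    o = offence(d)
    return {team: (o[k], d[k], o[k] / d[k]) for team, k in idx.items()}


def rank(values, best_is_high=True):
    """Convert {team: value} into {team: ladder position}, with 1 = best."""
    order = sorted(values, key=values.get, reverse=best_is_high)
    return {team: pos for pos, team in enumerate(order, start=1)}


# Made-up results for a three-team competition
teams = ["A", "B", "C"]
games = [("A", 100, "B", 60), ("A", 110, "C", 50), ("B", 90, "C", 70)]
ratings = odm(teams, games)
offence_ranks = rank({t: r[0] for t, r in ratings.items()})
defence_ranks = rank({t: r[1] for t, r in ratings.items()}, best_is_high=False)
overall_ranks = rank({t: r[2] for t, r in ratings.items()})
```

Because overall = offence / defence, a team can rank highly overall on the strength of just one of the two components, which is the pattern described above for the Roos, St Kilda, Adelaide, Fremantle and Sydney.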

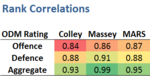

Though it's perhaps most interesting to focus on the differences amongst the Ratings Systems, we should also acknowledge the broad similarities, as quantified here by the Spearman rank correlation coefficients between ODM (in aggregate and for both of its constituent components) and the three other Rating Systems. Even the lowest correlation, which is between ODM's Offensive team rankings and Colley's overall team rankings, is +0.84.
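
Spearman's rank correlation is Pearson's correlation computed on ranks, with tied positions each given their average rank. A minimal plain-Python sketch follows; the rankings in the usage lines are made up for illustration and are not any System's actual Rankings.

```python
def average_ranks(values):
    """Rank values from 1 (smallest) upwards, giving tied values their mean rank."""
    order = sorted(range(len(values)), key=lambda k: values[k])
    ranks = [0.0] * len(values)
    pos = 0
    while pos < len(order):
        end = pos
        # Extend the run while the next value ties with the current one
        while end + 1 < len(order) and values[order[end + 1]] == values[order[pos]]:
            end += 1
        for k in order[pos:end + 1]:
            ranks[k] = (pos + end) / 2 + 1
        pos = end + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation between two equal-length sequences."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    return cov / (sx * sy)


# Hypothetical rankings of five teams by two Systems
rho = spearman([1, 2, 3, 4, 5], [2, 1, 4, 3, 5])
```

With no ties this agrees with the familiar shortcut 1 - 6Σd²/(n(n² - 1)), where d is each team's difference in rank.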

Across the season, from Round 4 onwards, these differences in rankings, however slight, have been enough to separate the Ratings Systems in terms of predictive ability. MARS finished up predicting the correct winner (by selecting the team with the higher Rating) in 75% of contests, ODM in 72.5%, Massey in 71.3%, and Colley in 70.7%. By way of context, the TAB Bookmaker correctly predicted 78.1% of contests over the same period.
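
The "higher Rating wins" rule used to score each System is simple to express. Here's a minimal sketch, assuming each game is recorded as a hypothetical tuple of the two teams, their pre-game Ratings and the winner (None for a draw). How draws and identical Ratings were treated in the figures above isn't stated, so skipping them is an assumption of this sketch.

```python
def tip_accuracy(games):
    """Share of games in which the team with the higher pre-game Rating won.

    games: iterable of (team_a, rating_a, team_b, rating_b, winner) tuples,
    with winner set to None for a draw (hypothetical format).
    """
    correct = tipped = 0
    for team_a, rating_a, team_b, rating_b, winner in games:
        if winner is None or rating_a == rating_b:
            continue  # assumption: draws and level Ratings are not scored
        tip = team_a if rating_a > rating_b else team_b
        tipped += 1
        correct += tip == winner
    return correct / tipped if tipped else float("nan")


# Made-up teams, Ratings and results
games = [
    ("A", 1040.0, "B", 1020.0, "A"),
    ("C", 1010.0, "D", 1005.0, "D"),
    ("E", 1000.0, "F", 990.0, "E"),
    ("G", 1000.0, "H", 995.0, None),
]
accuracy = tip_accuracy(games)
```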