2011 MARS Ratings After Round 14

There's not just the usual Ratings system to review this week but two more (three if you consider the competition ladder to be a Ratings system too).

Firstly though, to MARS Ratings, where we find relatively little movement this week and all of it confined to teams ranked between 6th and 12th. West Coast and St Kilda swapped 6th and 7th, the Roos and the Dogs traded 8th and 9th, while Fremantle and Essendon exchanged 11th and 12th.

Nine teams are now rated above 1000, up from a season low of 6 teams with such ratings only three weeks ago. There's also been a mild compression of the ratings, with the difference between the teams ranked 4th and 14th falling 2.5 Ratings Points to just under 41 Points.

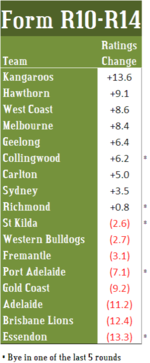

Momentum is still with the Roos, which picked up about another 3 Ratings Points this week to take its 5-week incremental tally to +13.6 Ratings Points.

Hawthorn, West Coast and Melbourne have also solidly accumulated Ratings Points over this same period, Hawthorn gaining just over 9 Ratings Points while remaining in 3rd, West Coast gaining just under 9 points and climbing from 9th to 6th, and Melbourne gaining almost 8.5 Ratings Points to climb from 12th to 10th.

Essendon, meantime, continues to shed Ratings Points, having dropped 13.3 Points across the last 5 rounds to see it now ranked 12th on the MARS ladder, 6 places lower than it found itself at the end of Round 10.

Other big losers across the last 5 rounds have been the Lions, down 12.4 Points but steady on the ladder in 15th; Adelaide, down 11.3 Points and 3 ladder positions to 14th; and the Gold Coast, down 9.2 Points and 1 ladder position with nowhere else to go in 17th.

This week, inspired by a paper sent to me by Andrew H that is summarised here, I've also calculated Colley and Massey ratings and rankings for the 17 teams.

I'll leave it to the curious reader to hunt down the details of these two rating systems and just summarise the key aspects here:

- Both the Colley and Massey Systems, like MARS, take into account "schedule strength" - in other words, they consider not just a team's results, but the opponents against which those results were achieved.

- Both Colley and Massey - at least as I've implemented them here - consider only the results for the current season. MARS implicitly includes teams' results from previous seasons by virtue of the fact that each team's initial Rating for the season is based partly on its Rating at the end of the previous season.

- The Colley System considers only results and ignores the margin of victory, so a 1-point win is as good as a 100-point win. In contrast, the Massey System, like MARS, considers the margin of victory, though, unlike MARS, it does not put a cap on the margin for any single game.
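
For readers who'd prefer code to a reading list, here's a minimal sketch of the standard textbook formulations of the two systems, which is not necessarily exactly how I've implemented them for this blog. The function names, the `(team_a, team_b, margin)` game format and the three-team season are all made up for illustration, and treating a draw as half a win for each team in Colley is an assumption.

```python
def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def schedule_matrix(teams, games, diagonal_start):
    """Games played on the diagonal (plus diagonal_start), minus head-to-head counts elsewhere."""
    idx = {t: i for i, t in enumerate(teams)}
    n = len(teams)
    mat = [[diagonal_start if i == j else 0.0 for j in range(n)] for i in range(n)]
    for team_a, team_b, _ in games:
        i, j = idx[team_a], idx[team_b]
        mat[i][i] += 1
        mat[j][j] += 1
        mat[i][j] -= 1
        mat[j][i] -= 1
    return idx, mat


def colley(teams, games):
    """Colley: solve C r = b, with C = 2I + schedule matrix and b = 1 + (wins - losses) / 2."""
    idx, c = schedule_matrix(teams, games, 2.0)
    b = [1.0] * len(teams)
    for team_a, team_b, margin in games:
        if margin != 0:  # assumption: a draw leaves b unchanged for both teams
            winner, loser = (team_a, team_b) if margin > 0 else (team_b, team_a)
            b[idx[winner]] += 0.5
            b[idx[loser]] -= 0.5
    return dict(zip(teams, solve(c, b)))


def massey(teams, games):
    """Massey: solve M r = p, with p the net margins; the last row forces ratings to sum to 0."""
    idx, m = schedule_matrix(teams, games, 0.0)
    p = [0.0] * len(teams)
    for team_a, team_b, margin in games:
        p[idx[team_a]] += margin  # margin is team_a's score minus team_b's, uncapped
        p[idx[team_b]] -= margin
    m[-1] = [1.0] * len(teams)  # M alone is singular, so replace one equation
    p[-1] = 0.0
    return dict(zip(teams, solve(m, p)))


# Made-up three-team season: (team_a, team_b, team_a's margin)
teams = ["A", "B", "C"]
games = [("A", "B", 1), ("A", "C", 100), ("B", "C", 10)]
colley_ratings = colley(teams, games)
massey_ratings = massey(teams, games)
print({t: round(r, 2) for t, r in colley_ratings.items()})
print({t: round(r, 2) for t, r in massey_ratings.items()})
```

In this toy season Colley spaces the teams evenly at 0.7, 0.5 and 0.3 because it sees only wins and losses, while Massey puts A about 30 points clear of B on the strength of the 100-point win. Both systems share the same schedule matrix, which is where the "schedule strength" adjustment comes from.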

Duly crunched (and now crunched correctly for those who read the earlier version of this blog), the numbers look like this:

Broadly, the story's one of consensus though a few significant differences can be discerned for particular teams.

- Essendon shows a large variation in ranking across the Systems. The Massey System ranks them as high as 7th, while MARS now has them 12th. They're 10th on the competition ladder.

- The same can be said of Fremantle, which ranks 7th on the ladder and 12th on the Massey System.

- The Saints are also problematic. MARS has them 7th - partly due to the Ratings Points they carried over from last season - while the ladder has them in 12th. Colley and Massey both have them 9th, so there's tripartisan support for elevating the Saints relative to their ladder position, but they're on the fringe of the 8 no matter how you look at it.

- The only other teams about which there's more than a position or two's conjecture are the Roos, which are ranked 8th by MARS and 11th by the Colley System; Melbourne, which are ranked 7th by Colley and 10th by MARS; and the Dogs, which are ranked 9th by MARS and 13th by Colley, Massey and the competition ladder.

Two measures can be used to gauge the level of agreement amongst the ratings systems:

- The pairwise similarity of the rankings produced by all four systems - the competition ladder included - can be measured using the Spearman Rank Correlation Coefficient, which takes on a maximum value of 1 when the two judges being compared have identical rankings, falls towards zero as the level of agreement declines, and turns negative when the two rankings tend to run in opposite directions.

- The pairwise similarity of the ratings produced by Colley, Massey and MARS can be measured by the correlation coefficient in the usual way.
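
As a rough sketch of how these two measures can be calculated, the Spearman coefficient is just the usual correlation coefficient applied to ranks rather than to the raw ratings. The function names and the five-team rankings below are made up for illustration and are not taken from this week's numbers.

```python
from math import sqrt


def pearson(x, y):
    """The usual (Pearson) correlation coefficient between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def ranks(values):
    """Rank 1 goes to the highest value; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: -values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def spearman(x, y):
    """Spearman Rank Correlation Coefficient: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Made-up rankings of five teams by two hypothetical systems
system_one = [1, 2, 3, 4, 5]
system_two = [1, 3, 2, 4, 5]  # disagrees only about 2nd and 3rd
print(round(spearman(system_one, system_two), 3))
print(round(spearman(system_one, system_one[::-1]), 3))
```

Swapping just two adjacent teams out of five gives a coefficient of 0.9, while a fully reversed ranking gives -1. Ladder positions can be passed in directly in place of ratings, since re-ranking them flips both lists in the same way and leaves the coefficient unchanged.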

Here are those measures of similarity:

The ranking correlations show that all four methods produce relatively similar rankings for all teams. Greatest similarity exists between the Colley rankings and the competition ladder - most likely because both focus (mainly in the case of the competition ladder) on outcomes instead of margins - and the greatest difference exists between the ladder and MARS rankings.

Turning instead to the team ratings produced by Colley, Massey and MARS, we find pairwise correlations all of around +0.95, signifying a very high level of agreement.

We'll dip into these alternative ratings methods again over the course of future weeks to see if the level of divergence increases.