Assessing Probability Forecasts: Beyond Brier and Log Probability Scores

Einstein once said that "No problem can be solved from the same level of consciousness that created it". In a similar spirit - but with, regrettably and demonstrably, a mere fraction of the intellect - I find that there's something deeply satisfying about discovering that an approach to a problem you've been using can be characterised as a special case of a more general approach.

So it is with the methods I've been using to assess the quality of probability forecasts for binary outcomes (ie a team's winning or losing) where I have, at different times, called upon the services of the Brier Score and the Log Probability Score.

Both of these scores are special members of the Beta Family of Proper Scoring Rules (nicely described and discussed in this paper by Merkle and Steyvers), which are defined in terms of loss functions as follows:

In these equations, l is the loss and f_i is the probability assigned to the outcome that eventuated.

Setting α and β equal to 0 in this formulation yields a version of the log probability score, while setting both parameters equal to 1 yields the Brier Score.
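For reference, the Beta family is usually written in the following integral form. The notation here is an assumption on my part rather than a transcription of the paper's equations: d is the outcome (1 if the event occurs, 0 otherwise) and f is the forecast probability that it occurs.

$$
l(d = 1 \mid f) = \int_f^1 c^{\alpha-1}(1-c)^{\beta}\,dc, \qquad
l(d = 0 \mid f) = \int_0^f c^{\alpha}(1-c)^{\beta-1}\,dc
$$

With α = β = 0 these reduce to -ln(f) and -ln(1-f), which is the log score. With α = β = 1 they give (1-f)^2/2 and f^2/2, which is the Brier Score up to a factor of one half.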

Other combinations of values of α and β are also permissible as long as both are greater than -1, so we can use this formulation to create an infinite number of alternative scoring methods.
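To make this concrete, here is a minimal Python sketch of how a loss from this family might be computed numerically. It assumes the usual integral form of the Beta family (the integral of c^(α-1)(1-c)^β from f to 1 when the event occurs, and of c^α(1-c)^(β-1) from 0 to f when it doesn't, with f the forecast probability of the event). The function name `beta_loss` is hypothetical, the sketch restricts itself to α, β ≥ 0 and 0 < f < 1, and it uses plain Simpson's rule; it is not a transcription of what the R scoring package does.

```python
import math


def beta_loss(f, d, alpha, beta, n=1000):
    """Approximate the Beta-family loss for forecast f and outcome d (1 or 0).

    Assumes alpha, beta >= 0 and 0 < f < 1. Uses composite Simpson's rule
    with n (even) sub-intervals, so accuracy degrades for forecasts very
    close to 0 or 1 when the parameters are small.
    """
    if d == 1:
        lo, hi = f, 1.0
        g = lambda c: c ** (alpha - 1) * (1 - c) ** beta
    else:
        lo, hi = 0.0, f
        g = lambda c: c ** alpha * (1 - c) ** (beta - 1)
    h = (hi - lo) / n
    total = g(lo) + g(hi)
    for k in range(1, n):
        # Simpson weights: 4 on odd interior points, 2 on even ones
        total += (4 if k % 2 else 2) * g(lo + k * h)
    return total * h / 3


# alpha = beta = 0 should reproduce the log score, -ln(f) for an event that occurred
print(beta_loss(0.7, 1, 0, 0), -math.log(0.7))
# alpha = beta = 1 should reproduce half the Brier Score, (1 - f)^2 / 2
print(beta_loss(0.7, 1, 1, 1), (1 - 0.7) ** 2 / 2)
```

Each printed pair should agree to many decimal places, which is a quick check that the two named scores really are members of the family.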

In the table below I've considered values of α and β in the range 0 to 30 and assessed the probability scores over the period 2006 to 2013 for the Overround-Equalising, Risk-Equalising and Log-Probability Score Optimising approaches.

To perform these assessments I've used the R scoring package, which is maintained by Ed Merkle, one of the authors of the paper I alluded to earlier.

For each combination of α and β values I've colour-coded the relevant cell in the table to indicate which of the three approaches to creating implicit home team probabilities yielded the superior probability score.

The LPSO methodology generates the best probability score for a significant portion of the values of α and β considered, including those associated with the Brier and Log Probability Scores.

The Overround-Equalising approach is superior for the next-largest proportion of the parameter values considered, generally where α and β are similar in value and both larger than 2.

The Risk-Equalising approach is only occasionally superior and for combinations of values of α and β in quite narrow bands.
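The comparison across the grid of α and β values can be sketched in Python as follows. This is an illustration only: it assumes the usual integral form of the Beta family losses, the function names are hypothetical, and the forecasts are made-up toy numbers rather than the actual probabilities from any of the three approaches.

```python
def beta_loss(f, d, alpha, beta, n=200):
    """Simpson's rule approximation to the Beta-family loss.

    Assumes alpha, beta >= 0, 0 < f < 1 and n even.
    """
    if d == 1:
        lo, hi = f, 1.0
        g = lambda c: c ** (alpha - 1) * (1 - c) ** beta
    else:
        lo, hi = 0.0, f
        g = lambda c: c ** alpha * (1 - c) ** (beta - 1)
    h = (hi - lo) / n
    total = g(lo) + g(hi)
    for k in range(1, n):
        total += (4 if k % 2 else 2) * g(lo + k * h)
    return total * h / 3


def mean_loss(forecasts, outcomes, alpha, beta):
    """Average loss over all games for one set of forecasts (lower is better)."""
    losses = [beta_loss(f, d, alpha, beta) for f, d in zip(forecasts, outcomes)]
    return sum(losses) / len(losses)


def best_method_grid(methods, outcomes, grid):
    """Map each (alpha, beta) pair to the name of the method with the lowest mean loss."""
    best = {}
    for a in grid:
        for b in grid:
            scores = {name: mean_loss(fs, outcomes, a, b) for name, fs in methods.items()}
            best[(a, b)] = min(scores, key=scores.get)
    return best


# Toy data: 1 = home team won, 0 = home team lost (invented for illustration)
outcomes = [1, 0, 1, 1, 0, 1]
methods = {
    "method_A": [0.70, 0.40, 0.60, 0.80, 0.30, 0.55],
    "method_B": [0.65, 0.45, 0.55, 0.75, 0.35, 0.60],
    "method_C": [0.75, 0.35, 0.65, 0.85, 0.25, 0.50],
}

winners = best_method_grid(methods, outcomes, grid=[0, 1, 2, 5])
for (a, b), name in sorted(winners.items()):
    print(f"alpha={a}, beta={b}: {name}")
```

Extending `grid` to every integer from 0 to 30 would give a table of the same shape as the one described above, with one winning method per cell.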

INTERPRETATION AND PRACTICAL IMPLICATIONS

The Merkle and Steyvers paper explains why, for a particular use, we might prefer a Scoring Rule whose values of α and β differ from those that produce the Brier and Log Probability Scores.

As they explain, scoring rules with α < β emphasise low-probability forecasts. In the current context where I'm focussing on home team probability forecasts, such a scoring rule would heavily penalise forecasters who attached a low probability to a home team victory that occurred, while not especially highly rewarding or penalising those who attached a high probability to a home team victory, regardless of the outcome.

Conversely, scoring rules with α > β emphasise high-probability home team forecasts such that forecasters who attach a high probability to a home team victory that does not occur are heavily penalised, while those who attach a low probability to a home team victory are not especially rewarded or penalised regardless of the outcome.

Merkle and Steyvers present another way of thinking about α and β, which is that α/(α+β) is like the cost of a false-positive (ie wrongly predicting a home team victory), and that 1-α/(α+β) is like the cost of a false-negative (ie wrongly predicting a home team loss).
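A small worked example may make the asymmetry concrete. It assumes the usual integral form of the Beta family, with f the forecast home team probability and a loss equal to the integral of c^(α-1)(1-c)^β from f to 1 if the home team wins, or of c^α(1-c)^(β-1) from 0 to f if it loses. The values α = 1 and β = 3 (so α < β) are chosen purely for illustration.

$$
l(\text{win} \mid f) = \int_f^1 (1-c)^3\,dc = \frac{(1-f)^4}{4}, \qquad
l(\text{loss} \mid f) = \int_0^f c\,(1-c)^2\,dc = \frac{f^2}{2} - \frac{2f^3}{3} + \frac{f^4}{4}
$$

A forecaster who assigns f = 0.1 to a home team that wins incurs a loss of 0.9^4/4, or about 0.164, while one who assigns f = 0.9 to a home team that loses incurs a loss of only about 0.083. In the limit, the worst possible false-negative (f = 0, home team wins) costs 1/4 and the worst possible false-positive (f = 1, home team loses) costs 1/12, so the false-positive accounts for (1/12)/(1/12 + 1/4) = 1/4 of the combined cost, which matches α/(α+β) = 1/4.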

Given all of this information, it might at first seem odd that the LPSO probabilities are superior in regions where α > β and also where α < β. This result comes about, however, partly because of the particular calibration properties of the LPSO probabilities relative to the Risk-Equalising and Overround-Equalising probabilities. Specifically, LPSO probabilities are well-calibrated for high-probability and for low-probability forecasts, but less so for medium-probability forecasts, as the table below reveals.

Each row of this table relates to games in which a particular probability forecaster has assigned a home team probability within the given range. The first row, for example, provides the results for all games where the home team was assigned a victory probability of less than 40%. For games where this was true of the Overround-Equalising probabilities, home teams actually won at a 28% rate. This compares with an average probability assigned to these teams of 26%, making the average absolute difference between the assessed probability and actual winning rate - the average calibration error - equal to 2.7%.
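A calibration table of this kind can be sketched in Python as below. The three probability bands are those discussed in the text, but the function name, the toy forecasts and the choice to measure calibration error as the absolute gap between the average forecast and the actual win rate within a band are all assumptions made for illustration; the figures in the table itself may have been computed differently.

```python
from statistics import mean

# Bands: under 40%, 40% to under 80%, and 80% or higher
BANDS = [(0.0, 0.4), (0.4, 0.8), (0.8, float("inf"))]


def calibration_by_band(forecasts, outcomes, bands=BANDS):
    """Summarise, per probability band, the average forecast, win rate and their gap."""
    rows = []
    for lo, hi in bands:
        in_band = [(f, d) for f, d in zip(forecasts, outcomes) if lo <= f < hi]
        if not in_band:
            # No games fell in this band, so there is nothing to summarise
            rows.append({"band": (lo, hi), "n": 0})
            continue
        avg_forecast = mean(f for f, _ in in_band)
        win_rate = mean(d for _, d in in_band)
        rows.append({
            "band": (lo, hi),
            "n": len(in_band),
            "avg_forecast": avg_forecast,
            "win_rate": win_rate,
            "error": abs(avg_forecast - win_rate),
        })
    return rows


# Toy home team probabilities and results (1 = home win), invented for illustration
forecasts = [0.25, 0.35, 0.30, 0.55, 0.65, 0.70, 0.85, 0.90]
outcomes = [0, 1, 0, 1, 0, 1, 1, 1]

for row in calibration_by_band(forecasts, outcomes):
    print(row)
```

Running the same function over each forecaster's probabilities in turn would give one block of rows per forecaster, which is the layout the table uses.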

The LPSO probabilities have the smallest calibration error for games in this under 40% probability range, but they also have the smallest calibration error - much smaller, in fact - for games where the home team probability is assessed as being 80% or higher.

The Overround-Equalising probabilities are best calibrated in the 40% to under 80% range, but are also quite well-calibrated for games with home team probabilities in the under 40% range. They're least well-calibrated in the 80% and above region, which is why the LPSO and Risk-Equalising probabilities are superior to the Overround-Equalising probabilities when α is much greater than β, making the cost of a false-positive forecast much higher.

CONCLUSION

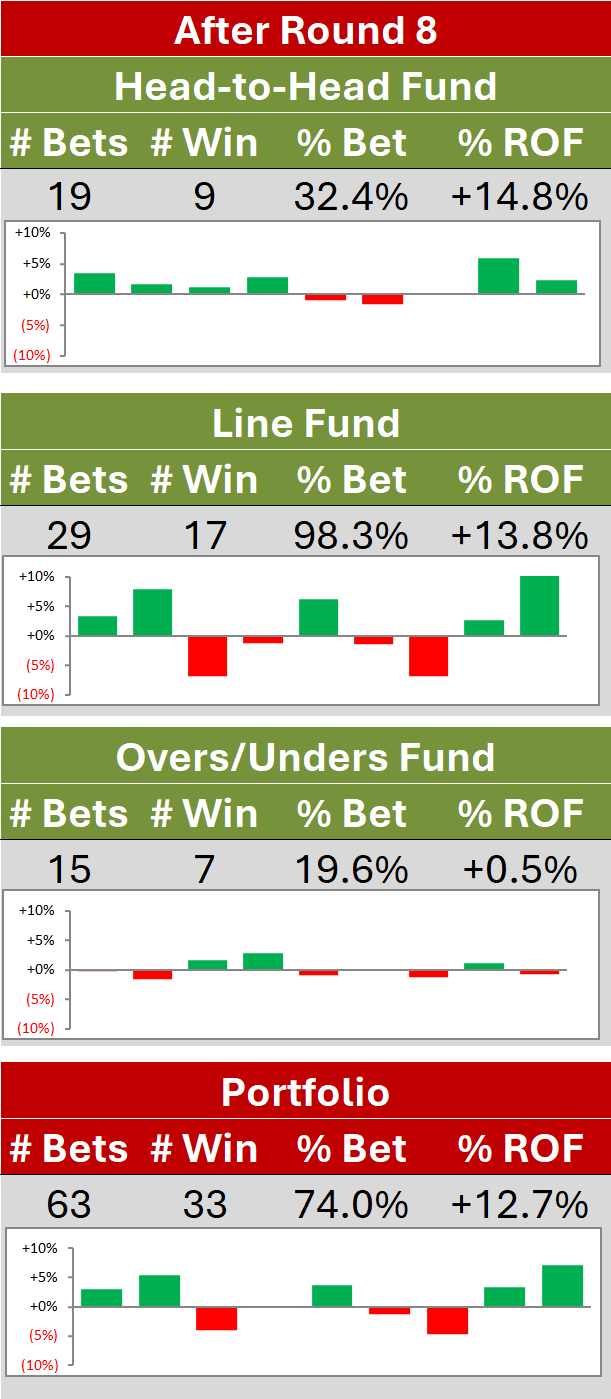

It's conceivable that, in a wagering context, the impact of forecasting errors might be asymmetric with respect to some outcome. The MatterOfStats funds themselves are good examples, as they wager only on home teams and only within a given price range.

More generally, I can envisage other forecasting situations where the Brier and Log Probability Scores won't necessarily be the best indicators of a probability forecaster's practical value because of the differential implications of false-positives and false-negatives. In these situations, a different member of the Beta Family of Proper Scoring Rules might be more appropriate.