Current Teams' All-Time MARS Rankings

I've looked previously at the best and worst AFL teams of all time and, whilst none of the current crop of teams is vying for either of those honours as at the end of Round 11 in the 2013 season, two (GWS and Melbourne) are in the 30 lowest-rated teams ever and one (Hawthorn) is in the 50 highest-rated teams ever.

For the purposes of today's blog I'm going to consider a team to be defined, a bit like graduates, based on the season in which they played. So, I can talk meaningfully about the Hawthorn 2012 team, the Hawthorn 2013 team, the Sydney 2001 team, and so on, treating each as a separate team. Defined in this way there have been 1,406 teams that have played VFL/AFL football across the seasons since 1897.

A site search on MAFL will provide you with a great deal of information about the MARS Rating System used here on MAFL, and reading the blog post linked in the first paragraph will give you the details of how I came up with the all-time Ratings. A couple of features of MARS Ratings that are particularly relevant to today's blog are that:

- the average team has a Rating of 1,000

- teams carry across about one half of the difference between their end-of-season Rating and 1,000 into the next season

The second point is important because it means that, for example, the Hawthorn 2013 team benefits (or suffers) to some extent from the performance of the Hawthorn 2012 team. Thus, many of the highest- and lowest-rated teams have at least partly achieved their Rating thanks to the teams of the same name that went before them.
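The carryover rule in the second point can be sketched in a few lines. This is a minimal illustration only: the function name `carry_over` is mine, the factor of exactly 0.5 stands in for the "about one half" described above, and the example Ratings are invented rather than taken from MARS history.

```python
AVERAGE_RATING = 1000.0  # the Rating of an average team


def carry_over(end_of_season_rating: float, carryover: float = 0.5) -> float:
    """Return a team's starting Rating for the next season.

    Assumes the team keeps `carryover` (roughly one half) of the gap
    between its end-of-season Rating and the average Rating of 1,000.
    """
    gap = end_of_season_rating - AVERAGE_RATING
    return AVERAGE_RATING + carryover * gap


# Hypothetical end-of-season Ratings, for illustration only.
strong_start = carry_over(1040.0)  # a strong team starts next season above average
weak_start = carry_over(960.0)     # a weak team starts next season below average
```

Under these assumptions a team that finishes 40 Ratings Points above average begins the following season 20 Ratings Points above average, which is the sense in which a team inherits part of its Rating from its predecessor.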

That said, the best-of-the-best and the worst-of-the-worst virtually all have records for the season in question fully justifying their extreme Rating.

Let me show you what I mean.

TEAMS WITH ALL-TIME LOW MARS RATINGS

We'll start with the under-performers - those teams that have registered the lowest ever MARS Ratings.

First amongst them is the Fitzroy 1996 team, the last AFL team to carry the name 'Fitzroy'. They achieved a low Rating of just under 914, which is over 4 standard deviations below the Rating of the average team, and they did so in a season in which they played only 22 games.

Their highest Rating in that year was 966.5, so they're a good example of a team whose Rating suffered, to at least some degree, from the Rating of their antecedents. That said, their own 1 and 21 record and 49.5 percentage for the 1996 season would seem to justify a fairly lowly Rating regardless.

Next-lowest Rated was the Sydney 1993 team, which finished the season 1 and 19 with a 63 percentage, followed by the Fitzroy 1995 team, which went 2 and 20 and recorded a 58 percentage.

I've highlighted in grey a few of the teams from recent seasons that appear amongst the lowest 30 teams of all time. Gold Coast and GWS have made two appearances each, though I'd note that the Gold Coast 2013 team is, for the time being, quite safely off this list.

The Melbourne 2013 team also makes an appearance, though it only just scrapes into 27th. There is, though, a long way to go in the season ...

St Kilda teams have the unwelcome record of having appeared most often in the table. Seven of them from four different decades are scattered through the list.

That's more than enough dwelling on the depressing end of the ladder. Let's move to the other end.

TEAMS WITH ALL-TIME HIGH MARS RATINGS

I've included the Top 60 all-time Rated teams in the table below for a couple of reasons: one, because we're looking at the more cheerful end of the ladder so I don't mind wallowing a little, and two, because there are a number of teams in positions 31 to 60 that current readers may have seen play first-hand.

Top of the tree is the Essendon 2000 team, which is the only team in MARS history to record a Rating over 1,070. Oddly, they are the only team amongst the top 3 on this list that won the Premiership in the year of their Ratings supremacy.

In fact, amongst the top 8 teams, only half won the Premiership in the year in which MARS reckons they dominated.

Across the top 30 teams there are 18 Premiership winners, 10 losing Grand Finalists, and just 2 teams that bowed out as Preliminary Finalists: the Geelong 2010 team and the Adelaide 2006 team.

That record of achievement in Finals underscores the quality of the teams in this list.

As I mentioned earlier, a number of teams from more-recent times are scattered throughout positions 31 to 60 in this table. There are eight teams from the period commencing in 2000, six from the 1990s, and six more from the 1980s. Whether this reflects a heightened incidence of stand-out teams in the modern era or merely the lengthening of the seasons affording greater opportunity for talented teams to accumulate Ratings Points is an open question.

BIGGEST MOVERS

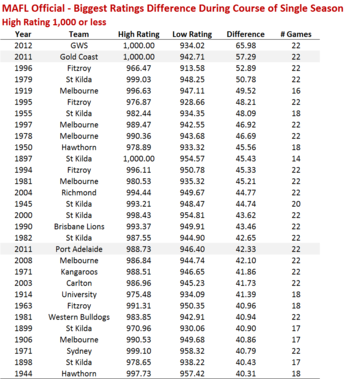

One way to remove, to some extent, the effect of teams' carry-over Ratings from one season to the next is to rank teams on the basis of the difference between their high and low Ratings within the same season. We can then look at teams whose highest Rating in a season was less than 1,000 (who, presumably, were mostly falling during the season) and teams whose highest Rating in a season was 1,000 or higher.
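As a rough sketch of that ranking, the code below takes each team's Ratings across a season, computes the gap between the highest and lowest, and splits the teams by whether their highest Rating reached 1,000. The names `SeasonRange`, `season_range` and `biggest_movers` are my own, and the Ratings are invented for illustration; they are not actual MARS Ratings.

```python
from typing import Dict, List, NamedTuple, Tuple

AVERAGE_RATING = 1000.0


class SeasonRange(NamedTuple):
    team: str
    high: float
    low: float
    change: float  # highest minus lowest Rating within the season


def season_range(team: str, ratings: List[float]) -> SeasonRange:
    """Summarise one team-season by its highest and lowest Rating."""
    high, low = max(ratings), min(ratings)
    return SeasonRange(team, high, low, high - low)


def biggest_movers(
    histories: Dict[str, List[float]]
) -> Tuple[List[SeasonRange], List[SeasonRange]]:
    """Return (peaked below 1,000, peaked at 1,000 or higher), largest change first."""
    ranges = [season_range(team, ratings) for team, ratings in histories.items()]
    below = [r for r in ranges if r.high < AVERAGE_RATING]
    above = [r for r in ranges if r.high >= AVERAGE_RATING]
    below.sort(key=lambda r: r.change, reverse=True)
    above.sort(key=lambda r: r.change, reverse=True)
    return below, above


# Hypothetical Ratings across a season, invented for illustration only.
histories = {
    "Team A 2001": [990.0, 975.0, 960.0, 945.0],
    "Team B 2001": [985.0, 1005.0, 1030.0, 1045.0],
    "Team C 2001": [998.0, 992.0, 985.0, 990.0],
}
below, above = biggest_movers(histories)
```

Note that the high-minus-low gap says nothing about direction, which is why the split on the season-high Rating is used as a proxy: a team that never got above 1,000 was, presumably, mostly falling.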

First, here's the all-time list of teams with the greatest intra-seasonal Rating change whose highest Rating in the season was under 1,000.

A number of the teams at the top of this list also appear in the earlier list of the 30 all-time lowest Rated teams, but once we move down beyond about the first dozen we find instead teams that have registered dramatically poor single season results.

Most of them come into their respective seasons rated under 1,000, but they all have managed to shed 40 Ratings Points or more in that single season.

Only three teams from the current decade have achieved this feat: the GWS 2012, Gold Coast 2011 and Port Adelaide 2011 teams.

From the previous decade, the Richmond 2004, St Kilda 2000, Melbourne 2008, and Carlton 2003 teams have also added themselves to the list.

The predominance of teams that have played in 22-game seasons on this list again suggests that it's 'time on the park' that has some part to play in allowing teams to achieve extreme results, good or bad.

With that in mind, the roughly 50 Ratings Point decline of the Melbourne 1919 team in a 16-game season, and the 45 Ratings Point decline of the St Kilda 1897 team in a 14-game season, seem all the more remarkable.

Turning next to teams recording the largest single season Ratings changes where the maximum Rating they achieved was 1,000 or more, I offer the following table.

Again, it's only the teams at the very top of the list that are familiar to us from the earlier all-time highest Rated teams, and that excludes the team at the very top, the Brisbane Lions 1999 team. That team started the season 16 Ratings Points below an average team and so, despite adding an incredible 60 Ratings Points during the course of the season, was unable to top the 1,048.4 Ratings Point threshold required to sneak into the top 30 all-time teams.

After that there are a number of teams that must have been having what the coaching staff would undoubtedly have labelled "rebuilding" years as the team managed to drag a below-average Rating up to an average or, in some cases, well-above average Rating.

Five teams on this list are from the current decade, and 11 more are from the decade prior. Only two teams on the list played fewer than 22 games in the season in which they recorded the significant Ratings improvement: the University 1911 team, which played only 18 games, and the Collingwood 1929 team, which played only 20. All four of the top teams in the list played 25 or 26 games in their stand-out seasons.

I'll finish this section of the blog by providing the full list of Ratings for teams from the last eight seasons.

It's an interesting exercise to cast your eye down the Rank column to assess the overall strength of the competition in each season. In 2010 and 2011, for example, three teams from the 70 best all-time teams ran around, whereas in 2007 we had the 10th-best all-time team in the Cats but then a chasm to the next-best team, Sydney, who rank 278th.

ZERO CARRYOVER MARS RATINGS

Another way to assess teams' performances independent of the teams of the same name that went before them is to reset every team's Rating to 1,000 at the start of each season.

This produces a new candidate list of worst-ever teams.

Though the ordering is different, the composition of this list of 30 teams is very similar to the earlier list containing the all-time low Ratings based on the Official MARS Ratings.

Amongst the first 20 teams, 18 are from the earlier list; amongst the first 25 teams, 21 are in common; and amongst all 30, 22 are in common.
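Counts of this kind can be reproduced with a short helper. The sketch below follows one reading of the comparison, namely how many of the first n teams on the new list appear anywhere on the earlier list; the function name `common_with_earlier` and the team labels are placeholders of mine, not entries from either table.

```python
from typing import List


def common_with_earlier(new_list: List[str], earlier_list: List[str], n: int) -> int:
    """Count how many of the first n teams in new_list also appear in earlier_list."""
    earlier = set(earlier_list)
    return sum(1 for team in new_list[:n] if team in earlier)


# Placeholder team labels, ordered worst-first; invented for illustration only.
official = ["T1", "T2", "T3", "T4", "T5"]
zero_carryover = ["T2", "T1", "T6", "T3", "T7"]

top2_common = common_with_earlier(zero_carryover, official, 2)
top5_common = common_with_earlier(zero_carryover, official, 5)
```

With real data, `earlier_list` would hold the 30 teams from the Official MARS Ratings table and `new_list` the 30 teams from the zero-carryover table, each in rank order.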

We can fairly say then, I think, that while Rating carryover from one season to the next might alter a team's all-time ranking at the margin, it rarely propels a poorly performed team particularly far up or down the all-time ladder.

For example, the first two teams on this list are the same two teams that appeared atop the earlier list, albeit in the reverse order, and the next four teams on the list come from the top 10 on the earlier list.

Much the same claim can be made in relation to the list of the top 60 all-time highest rated teams, whether we choose to use the Official MARS Ratings or the Ratings that we can derive by setting the season-to-season carryover to 0.

In fact, if anything, the claim we can make is even stronger, since nine of the top 10 teams on the list created using the zero-carryover approach are the same as the top 10 teams we saw on the earlier list of Official Ratings.

Further, amongst the top 20 here, 19 are common; amongst the top 25, 23 are common; and amongst the top 30, 24 are common.

For completeness' sake, here are the same lists as I provided earlier based on the Official MARS Ratings, now based on the zero-carryover approach.

Firstly, here are the all-time greatest single season movers, low and high.

Again we find a high level of representation for teams from the modern era in both lists, though especially amongst the teams making large single season gains.

Lastly, here are the Ratings for teams for recent seasons.