Another Day, Another Model

In the previous blog I developed models for predicting victory margins and found that the selection of a 'best' model depended on the criterion used to measure performance.

In this blog I'll review the models that we developed and then describe how I created another model, this one designed to predict line betting winners.

The Low Average Margin Predictor

The model that produced the lowest mean absolute prediction error (MAPE) was constructed by combining the predictions of two other models, both of the type that I collectively called floating window models. One of the constituent models looked only at the victory margins and bookie's home team prices for the last 22 rounds, and the other looked at the same data but for the most recent 35 rounds.

On their own neither of these two models produces an especially small MAPE, but optimally combined they produce an overall model with a 28.999 MAPE across seasons 2008 and 2009 (I know that the three decimal places is far more precision than is warranted, but any rounding's going to nudge it up to 29 which just doesn't have the same ability to impress. I consider it my nod to the retailing industry, which persists in believing that price proximity is not perceived linearly and so, for example, that a computer priced at $999 will be thought meaningfully cheaper than one priced at $1,000).

Those optimal weightings were created in the overall model by calculating the linear combination of the underlying models that would have performed best over the most recent 26 weeks of the competition, and then using those weights for the current week's predictions. These weights will change from week to week as one model or the other tends to perform better at predicting victory margins; that is what gives this model its predictive chops.
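
As a rough sketch of that weighting step, the snippet below searches for the blend of two models' margin predictions that would have produced the lowest MAPE over a trailing window of games. It makes assumptions that go beyond what's described above: that the two weights sum to 1, that a simple grid search is good enough, and that the function names and the tiny data set (all hypothetical) stand in for 26 weeks of real results.

```python
from statistics import mean

def mape(predictions, actuals):
    """Mean absolute prediction error, in points."""
    return mean(abs(p - a) for p, a in zip(predictions, actuals))

def best_weight(preds_a, preds_b, actuals, steps=100):
    """Grid-search the weight w on model A (with 1 - w on model B) that minimises MAPE.

    Assumption: the weights are constrained to sum to 1 and to lie between 0 and 1.
    """
    def blended_error(w):
        blend = [w * a + (1 - w) * b for a, b in zip(preds_a, preds_b)]
        return mape(blend, actuals)

    candidates = [i / steps for i in range(steps + 1)]
    return min(candidates, key=blended_error)

# Hypothetical margin predictions from the two floating window models,
# plus the actual margins, for games in the trailing window.
preds_22 = [10.0, 20.0, -5.0, 30.0]
preds_35 = [20.0, 10.0, 5.0, 10.0]
actuals = [15.0, 15.0, 0.0, 20.0]

w = best_weight(preds_22, preds_35, actuals)

# The weight found on past games is then applied to this week's predictions.
this_week = w * 12.0 + (1 - w) * 18.0
```

Re-running the search each week on the latest trailing window is what would let the weights drift towards whichever model has been performing better recently.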

This low MAPE model henceforth I shall call the Low Average Margin Predictor (or LAMP, for brevity).

The Half Amazing Margin Predictor

Another model we considered produced margin predictions with a very low median absolute prediction error. It was similar to the LAMP but used four rather than two underlying models: the 19-, 36-, 39- and 52-round floating window models.

It boasted a 22.54 point median absolute prediction error over seasons 2008 and 2009, and its predictions have been within 4 goals of the actual victory margin in a tick over 52% of games. What destroys its mean absolute prediction error is its tendency to produce victory margin predictions that are about as close to the actual result as calcium carbonate is to coagulated milk curd. About once every two-and-a-half rounds one of its predictions will prove to be 12 goals or more distant from the actual game result.
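
To see how a model can have a fine median but a poor mean, consider this small illustration. The nine absolute errors are made up purely for the example; they are not HAMP's actual errors.

```python
from statistics import mean, median

# Hypothetical absolute prediction errors, in points, for nine games.
abs_errors = [5, 10, 15, 20, 22, 24, 30, 75, 90]

# The median is the middle value, so it ignores how bad the worst misses are.
median_error = median(abs_errors)

# The mean is dragged upwards by the 75- and 90-point misses.
mean_error = mean(abs_errors)

# Share of predictions within 4 goals (24 points, at 6 points per goal).
share_within_4_goals = sum(e <= 24 for e in abs_errors) / len(abs_errors)
```

Here the median error is 22 points while the mean is a little over 32, entirely because of the two big misses.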

Still, its median absolute prediction error is truly remarkable, which in essence means that its predictions are amazing about half the time, so I shall name it the Half Amazing Margin Predictor (or HAMP, for brevity).

In their own highly specialised ways, LAMP and HAMP are impressive but, like left-handed chess players, their particular specialities don't appear to provide them with any exploitable advantage. To be fair, TAB Sportsbet does field markets on victory margins and it might eventually prove that LAMP or HAMP can be used to make money on these markets, but I don't have the historical data to test this now. I do, however, have line market data that enables me to assess LAMP's and HAMP's ability to make money on this market, and they exhibit no such ability. Being good at predicting margins is different from being good at predicting handicap-adjusted margins.

Nonetheless, I'll be publishing LAMP's and HAMP's margin predictions this season.

HELP, I Need Another Model

Well if we want a model that predicts line market winners we really should build a dedicated model for this, and that's what I'll describe next.

The type of model that we'll build is called a binary logit. These can be used to fit a model to any phenomenon that is binary - that is, two-valued - in nature. You could, for example, fit one to model the characteristics of people who do or don't respond to a marketing campaign. In that case, the binary variable is campaign response. You could also, as I'll do here, fit a binary logit to model the relationship between home team price and whether or not the home team wins on line betting.

Fitting and interpreting such models is a bit more complicated than fitting and interpreting models fitted using the ordinary least squares method, which we covered in the previous blog. For this reason I'll not go into the details of the modelling here. Conceptually though all we're doing is fitting an equation that relates the Home team's head-to-head price with its probability of winning on line betting.
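
For the curious, here is a minimal sketch of what fitting such an equation can look like. It assumes the standard logistic form, P(home team wins on line betting) = 1 / (1 + exp(-(a + b x price))), and fits a and b by plain gradient ascent on the log-likelihood, which is a simple stand-in for the estimation routine a statistics package would use. The prices and results are invented for illustration, and the function names are hypothetical.

```python
import math

def sigmoid(z):
    """Logistic function: maps any real number to a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def fit_logit(prices, line_wins, learning_rate=0.1, iterations=5000):
    """Fit P(line win) = sigmoid(a + b * price) by gradient ascent on the log-likelihood."""
    a = b = 0.0
    n = len(prices)
    for _ in range(iterations):
        grad_a = grad_b = 0.0
        for price, won in zip(prices, line_wins):
            residual = won - sigmoid(a + b * price)  # observed outcome minus fitted probability
            grad_a += residual
            grad_b += residual * price
        a += learning_rate * grad_a / n
        b += learning_rate * grad_b / n
    return a, b

# Hypothetical data: home team head-to-head prices and whether the home
# team won on line betting (1) or not (0).
prices = [1.2, 1.3, 1.5, 1.8, 2.0, 2.2, 2.8, 3.0, 3.5, 4.5]
line_wins = [1, 1, 1, 0, 1, 1, 0, 1, 0, 0]

a, b = fit_logit(prices, line_wins)

# Fitted probabilities of a line betting win for a short- and a long-priced home team.
prob_short_priced = sigmoid(a + b * 1.3)
prob_long_priced = sigmoid(a + b * 4.0)
```

In this invented data set the short-priced home teams win on line betting more often, so the fitted slope b comes out negative. Real line betting data need not behave that way, since the handicap is designed to even things up.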

For this modelling exercise I have again created 47 floating window models of the sort I've just described: one model that uses price and line betting result data for only the last 6 rounds, another that uses the same data for the last 7 rounds, and so on up to one that uses data from the last 52 rounds.
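
The bookkeeping behind the floating windows can be sketched as follows. It assumes, for illustration only, that rounds are numbered consecutively across seasons; the function name is hypothetical.

```python
# One floating window model per window length: 6, 7, ..., 52 rounds.
WINDOW_LENGTHS = range(6, 53)

def training_rounds(current_round, window):
    """Rounds whose data feed the model that predicts `current_round`.

    Only rounds strictly before the current one are used, so a game is never
    predicted by a model that was fitted using that game's result.
    """
    return list(range(current_round - window, current_round))

number_of_models = len(WINDOW_LENGTHS)
shortest_window = training_rounds(60, 6)
```

For round 60, say, the 6-round model would be fitted on rounds 54 to 59 and the 52-round model on rounds 8 to 59.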

Then, as I did in creating HAMP and LAMP, I looked for the combination of floating window models that best predicts winning line bet teams.

The overall model I found to perform best combines 24 of the 47 floating window models - I'll spare you the Lotto-like list of those models' numbers here. In 2008 this model predicted the line betting winner 57% of the time and in 2009 it predicted 64% of such winners. Combined, that gives it a 61% average across the two seasons. I'll call this model the Highly Evolved Line Predictor (or HELP), the 'highly evolved' part of the name in recognition of the fact that it was selected because of its fitness in predicting line betting winners in the environment that prevailed across the 2008 and 2009 seasons.

Whether HELP will thrive in the new environment of the 2010 season will be interesting to watch, as indeed will be the performance of LAMP and HAMP.

In my previous post I drew the distinction between fitting a model and using it to predict the future and explained that a model can be a good fit to existing data but turn out to be a poor predictor. In that context I mentioned the common statistical practice of fitting a model to one set of data and then measuring its predictive ability on a different set.

HAMP, LAMP and HELP are somewhat odd models in this respect. Certainly, when I've used them to predict they're predicting for games that weren't used in the creation of any of their underlying floating window models. So that's a tick.

They are, however, fitted models in that I generated a large number of potential LAMPs, HAMPs and HELPs, each using a different set of the available floating window models, and then selected those models which best predicted the results of the 2008 and 2009 seasons. Accordingly, it could well be that the superior performance of each of these models can be put down to chance, in which case we'll find that their performances in 2010 will drop to far less impressive levels.
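
A small simulation shows why this matters. Suppose every candidate model were nothing more than a coin flip at picking line winners. If we generate a lot of them and keep the one with the best record over the selection period, that record will look impressive even though the model has no skill at all. The numbers of models and games below are arbitrary assumptions for the illustration, not the counts used in building HELP.

```python
import random

def best_of_many_coin_flippers(n_models=1000, n_games=370, seed=1):
    """Pick the 'best' of many skill-free models and see how it does on fresh games.

    Each model picks line winners with a true success rate of exactly 50%.
    Returns (selection-period success rate, fresh-games success rate) for the
    model with the best selection-period record.
    """
    rng = random.Random(seed)
    records = []
    for _ in range(n_models):
        selection_rate = sum(rng.random() < 0.5 for _ in range(n_games)) / n_games
        fresh_rate = sum(rng.random() < 0.5 for _ in range(n_games)) / n_games
        records.append((selection_rate, fresh_rate))
    # Select on the selection-period record only, as we would in practice.
    return max(records, key=lambda record: record[0])

best_selection_rate, fresh_rate = best_of_many_coin_flippers()
```

The winning model's selection-period record will typically sit well above 50%, while its record on fresh games is just another draw from a coin flipper and can be expected to hover around 50%.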

We won't know whether or not we're witnessing such a decline until some way into the coming season, but in the meantime we can ponder the basis on which we might justify asserting that the models are not mere chimeras.

Recall that each of the floating window models uses as its predictive variable nothing more than the price of the Home team. The convoluted process of combining different floating window models with time-varying weights for each means that, in essence, the predictions of HAMP, LAMP and HELP are all just sophisticated transformations of one number: the Home team price for the relevant game.

So, for HAMP, LAMP and HELP to be considered anything other than statistical flukes it needs to be the case that:

- the TAB Sportsbet bookie's Home team prices are reliable indicators of Home teams' victory margins and line betting success

- the association between Home team prices and victory margins, and between Home team prices and line betting outcomes, varies in a consistent manner over time

- HAMP, LAMP and HELP are constructed in such a way as to effectively model these time-varying relationships

On balance I'd have to say that these conditions are unlikely to be met. Absent the experience gained from running these models live during a fresh season, then, there's no way I'd be risking money on any of them.

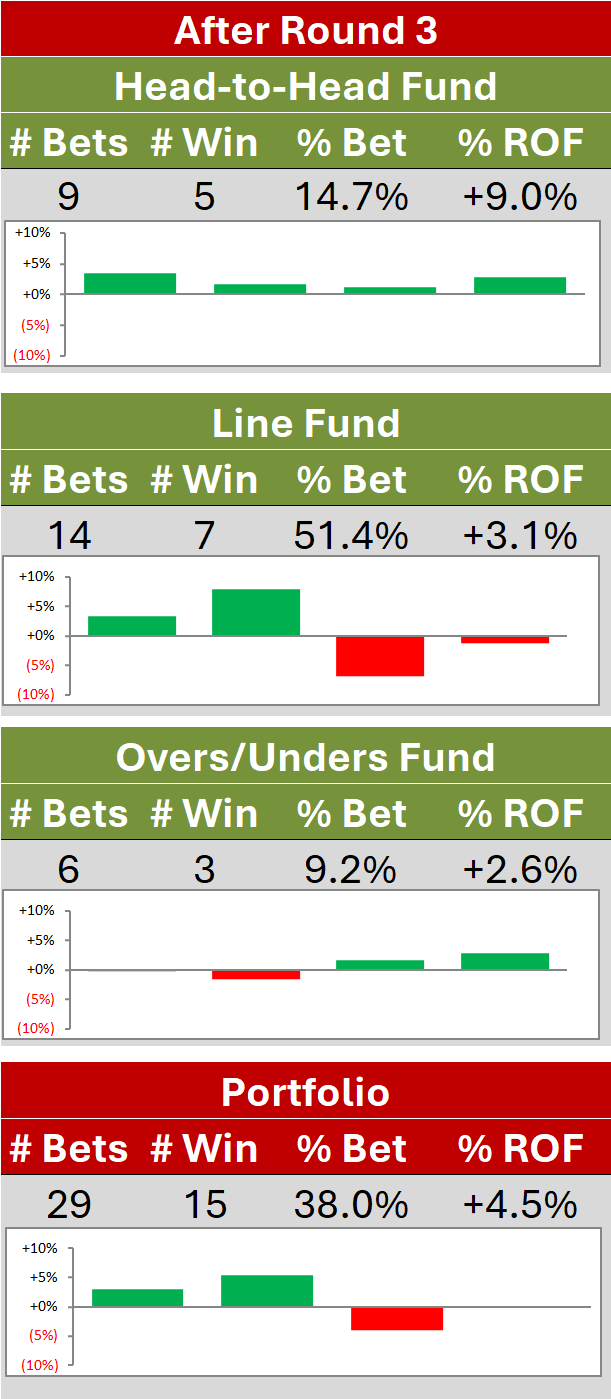

Many of the algorithms that support MAFL Funds have been developed in much the same way as I've described in this and the previous blog, though each of them is based on more than a single predictive variable and most of them have been shown to be profitable in testing using previous seasons' data and in real-world wagering.

Regardless, a few seasons of profitability doesn't rule out the possibility that any or all of the MAFL Fund algorithms have just been extremely lucky.

That's why I'm not retired ...